Shocking! Stanley Milgram Faked Results From One of the Most Famous Experiments Ever

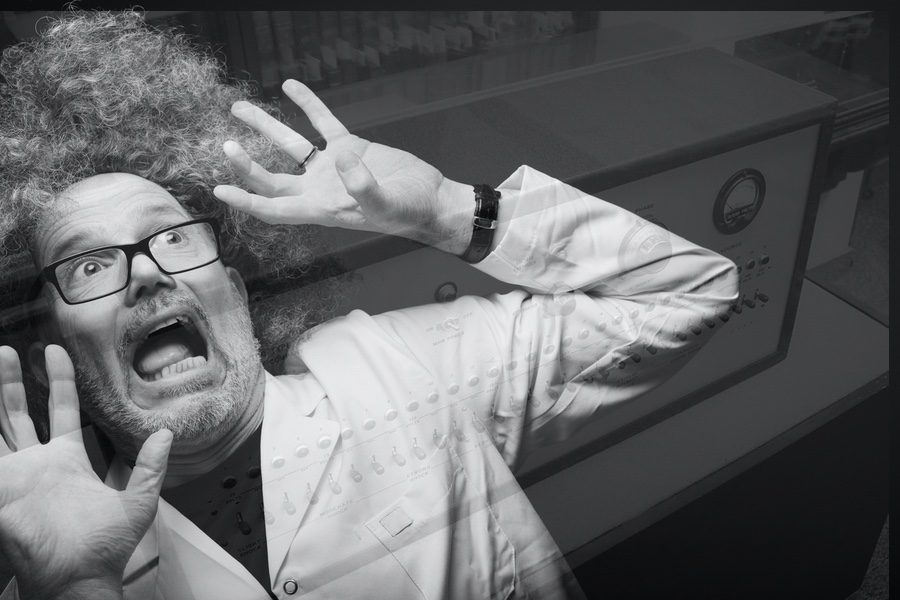

Milgram's fake "shock" box.

[Note: See follow-up below for one correction and some additional clarification.]

It’s one of the most famous and most influential psych experiments ever. Now we’re learning it was bogus.

Sixty-plus years ago, Yale University professor Stanley Milgram used a fake shock-torture setup to show that people are frighteningly easy to manipulate into doing as they’re told. One researcher described the setup as designed to discover whether “ordinary Americans would obey immoral orders, as many Germans had done during the Nazi period.”

The answer Milgram gave that question was a disturbing yes. In his experiment he instructed subjects to deliver “shocks” to other persons when he told them to. A disturbing majority of the people complied with his voice of authority. In reality, of course, the person getting “shocked” was in on it. He only acted like he was getting shocked. As the fake shocks’ intensity went up, so did his acted-out screams of pain and agony.

Disturbing Yet Influential Results

Milgram said this showed that people here in America are frighteningly easy to persuade. They’ll even shock another human being into total agony, as long as someone in charge says they should do it.

The experiment was both dramatic and disturbing. It shocked the nation, and it ended up in every first-year psych textbook.

In 2002 Psychology Today summarized Milgram’s influence:

Those groundbreaking and controversial experiments have had — and continue to have — long-lasting significance. They demonstrated with jarring clarity that ordinary individuals could be induced to act destructively even in the absence of physical coercion, and humans need not be innately evil or aberrant to act in ways that are reprehensible and inhumane. While we would like to believe that when confronted with a moral dilemma we will act as our conscience dictates, Milgram’s obedience experiments teach us that in a concrete situation with powerful social constraints, our moral sense can easily be trampled.

We Should Have Doubted It All Along

And decades of students have believed it. I bought it myself, as a student. I should have known better. We’d all be hard-pressed to think of anyone we know who’d hurt someone that way, for no reason other than following orders.

Of course authority has its effects. Bosses, teachers, and “experts” can still persuade people to do wrong. In 2012, though, an Australian named Gina Perry published a book uncovering data Milgram had left out of his reports. It came from forms Milgram had had all his subjects fill out after the experiment was over. On those forms he’d asked them whether they’d actually believed they were delivering real electrical shocks to the “victim.”

The Result, With the Rest of the Data

Half of them guessed it was fake. They didn’t buy the setup. They knew it was a charade, so they went along with it. Those who thought it was real tended to be the ones who’d “defied” authority and pulled out of it.

Perry and a team of co-authors published a more formal follow-up to that book this summer. PsyPost reports her summary of the results:

“People who believed the learner was in pain were two and a half more times likely to defy the experimenter and refuse to give further shocks. We found that contrary to Milgram’s claims, the majority of subjects in the obedience experiments were defiant, and a significant reason for their refusal to continue was to spare the man pain.”

She went as far as saying it was time to revise the textbooks in light of this new data.

Lesson: We’re Free to Question

I could draw half a dozen lessons from this. I’ll stick with just one, though: Be cautious treating scientists as experts, especially when they speak on human matters. Scientists are as prone to bias as any other human. They’ve got their social networks to manage. They want to keep up their standing among their peers. And their grant funding, too. Many of them live in a one-sided opinion environment — the university — that’s dominated by leftist thinking.

So science isn’t always objective. Sadly, it isn’t even always honest. Yet too often people believe what they’re told science says, merely because it’s “science.” Well-grounded results? Sure. But again, when it comes to human matters, science is all too fallible. So we have the right to wonder and to question. We’re allowed to disagree, or at least withhold judgment, until sufficient evidence comes in.

Common sense can go wrong, certainly. Sometimes, though, it’s smarter than “research.” Common sense could have told any of us to go slow accepting Milgram’s results. Yes, most people tend to want to obey authority, but few of us would torture another human being just because a man in a lab coat told us to.

We tend to think of science as the authority. We see it as the source of wisdom, whose dictates we must obey. It’s ironic, though. Milgram warned us not to obey authority blindly, but to question it based on our own ethics, our own wisdom. That’s still good advice — especially when it’s “science” that wants to claim that authority.

Follow-Up, November 22

Based on feedback from a trusted friend who also has expertise in social psychology, I need to add the following correction and clarification:

My wording of “bogus” in the first sentence was careless and wrong, and “faked” in the headline was overstated if not also wrong. There’s little to no reason to think Milgram intended his experiment as anything other than legitimate, honest science. It remains true that he omitted important, results-altering information from his analysis, but people do things for different reasons and with different motivations. Hindsight should be cautious judging a scientist sixty years after the fact for not seeing what we see more clearly now.

Also, I would not want anyone to think Perry’s new information completely overthrows Milgram’s conclusions. Other valid research still shows that persons can too easily be swayed by authority. Her updated analysis does reduce the significance of his work, however, and it puts serious doubt on his most disturbing conclusion: that many of us will harm others simply because someone in authority tells us to.

What-ifs are notoriously hard to get right. In my view, though, it’s likely that had Milgram included the same full information in his analysis, his experiments would never have achieved anything like the long-lasting iconic status and influence they’ve held all these years.

I stand by the lesson I’ve drawn from this: that we are free to question when social science contradicts common sense.

Tom Gilson (@TomGilsonAuthor) is a senior editor with The Stream, the author of A Christian Mind: Thoughts on Life and Truth in Jesus Christ and Critical Conversations: A Christian Parent’s Guide to Discussing Homosexuality with Teens, and the lead editor of True Reason: Confronting the Irrationality of the New Atheism.